About Me

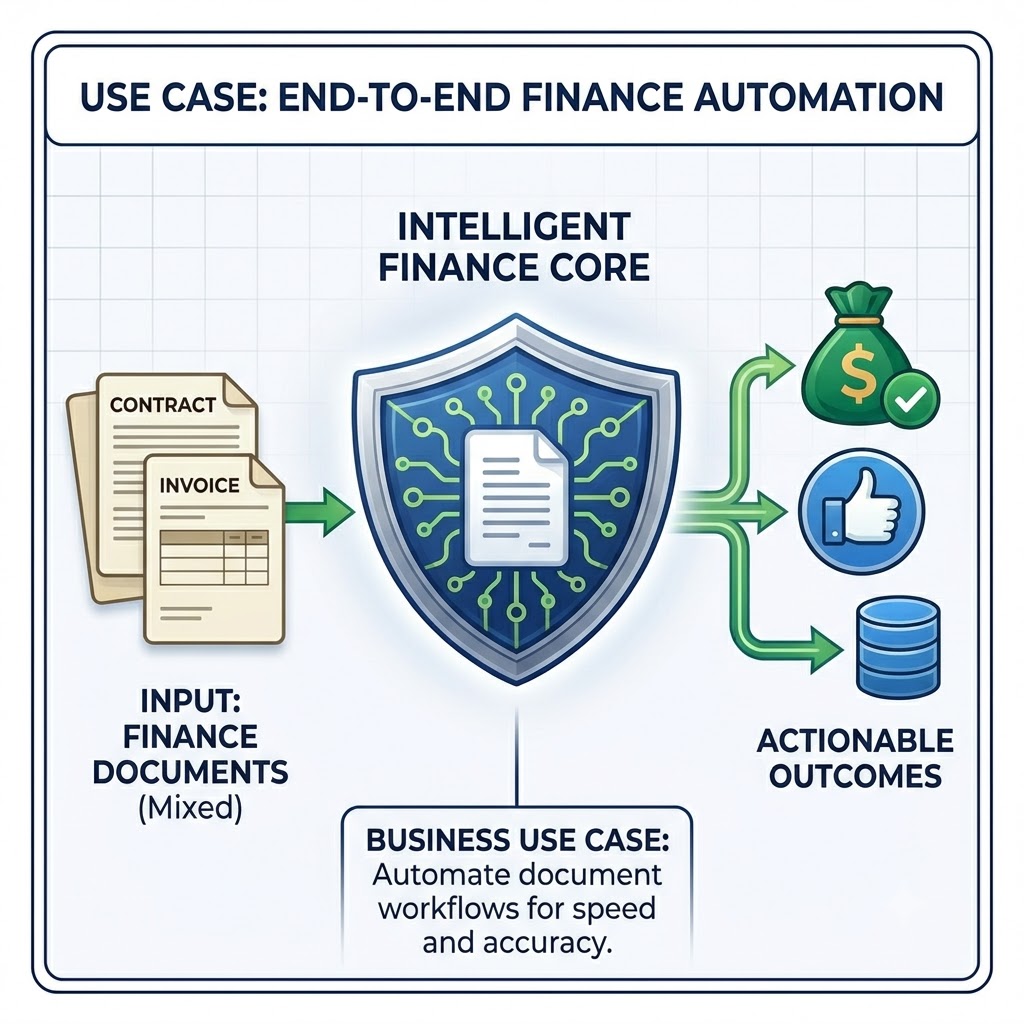

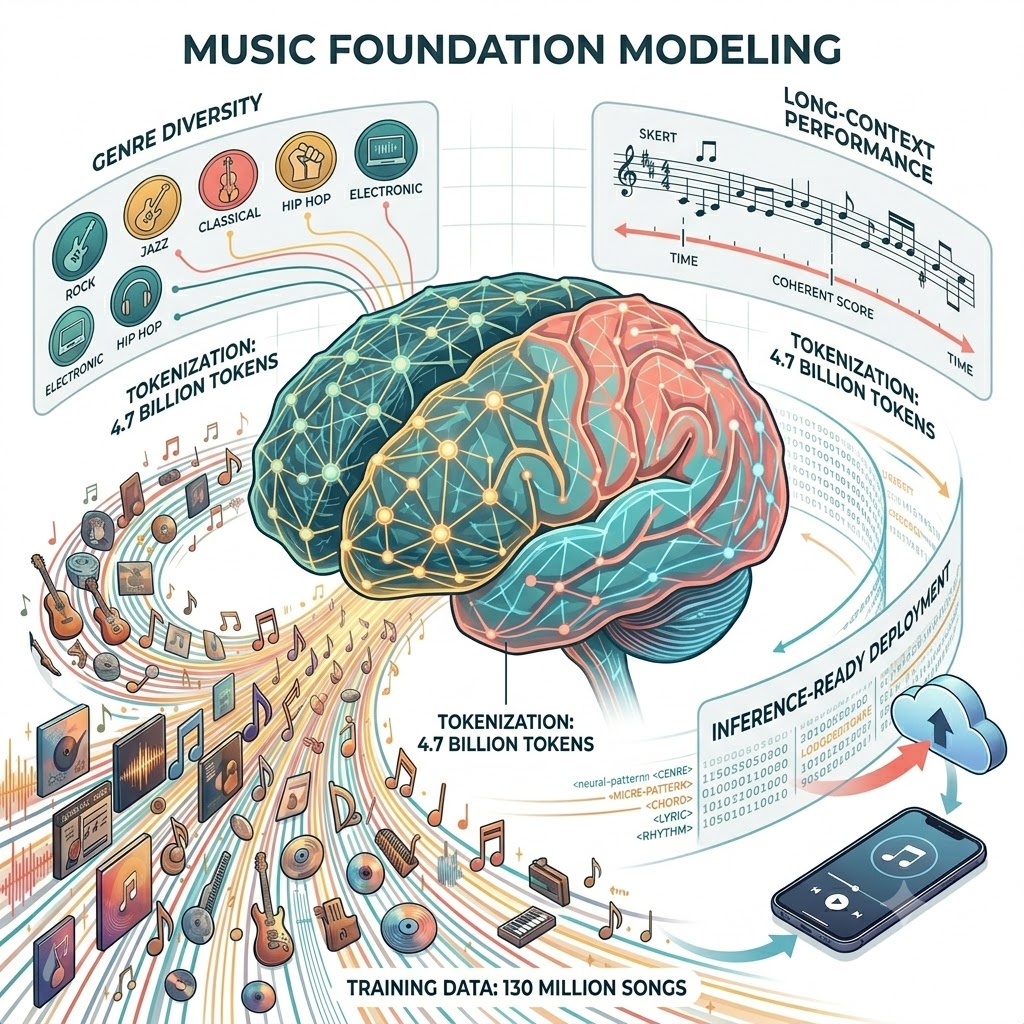

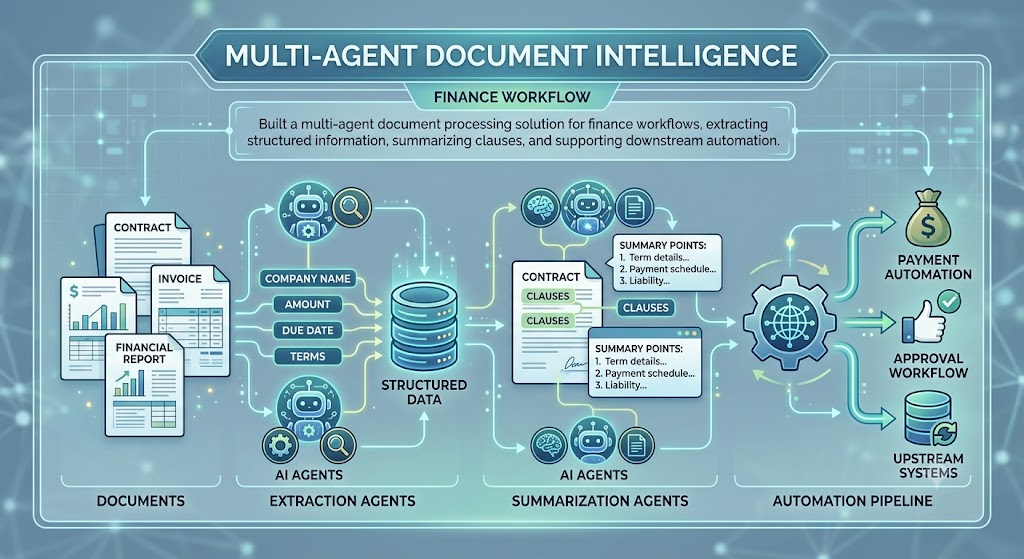

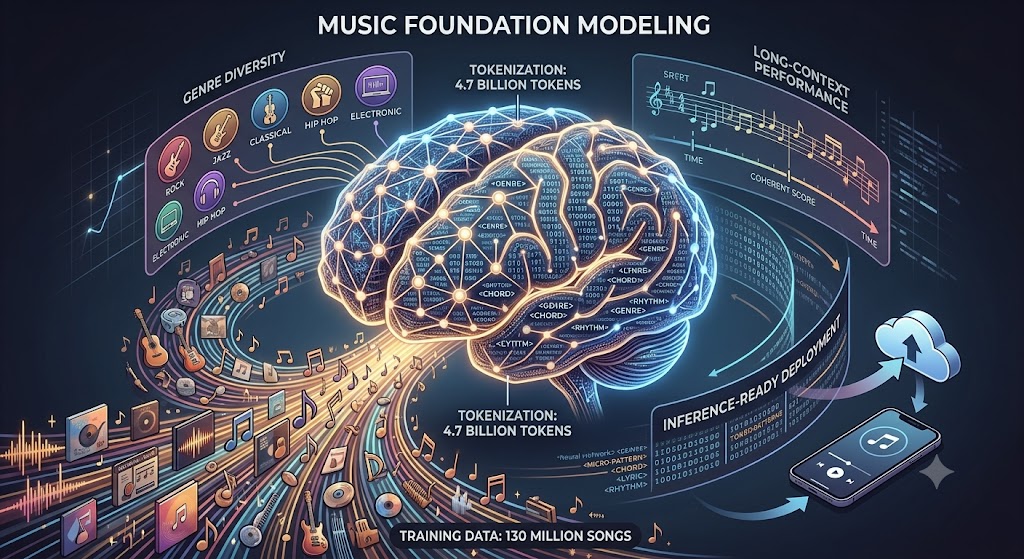

I am a Senior Applied AI/ML Scientist with 6+ years of experience delivering production AI products across finance, telecom, and music. My recent work includes building multi-agent document intelligence, hybrid visual-lingual signature authentication, and scalable music foundation models with extended context.

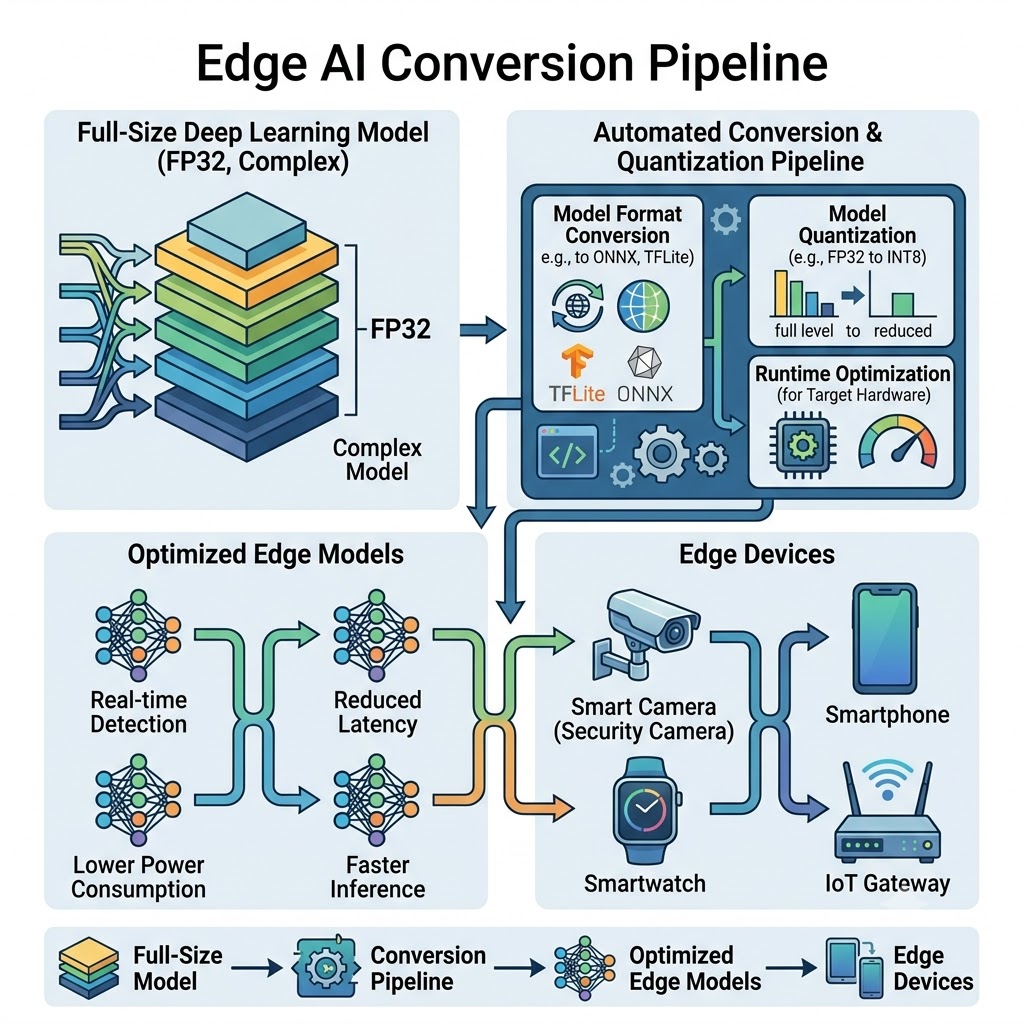

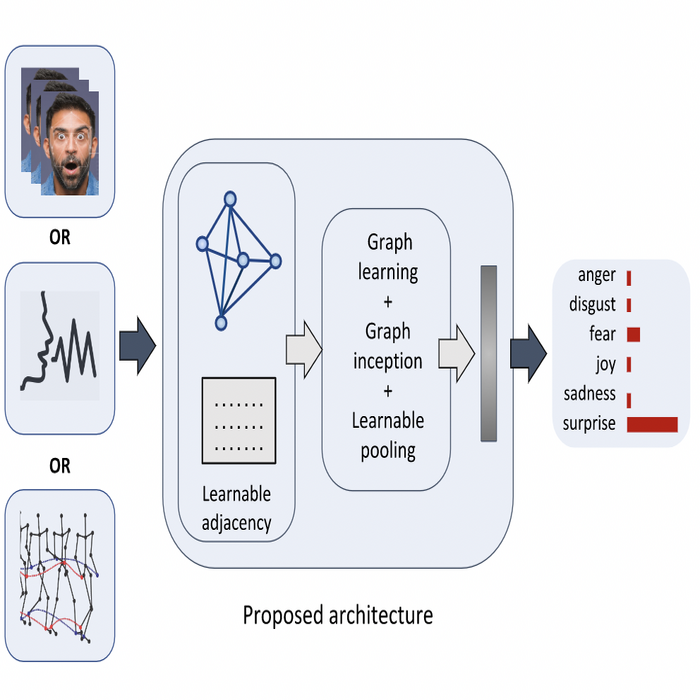

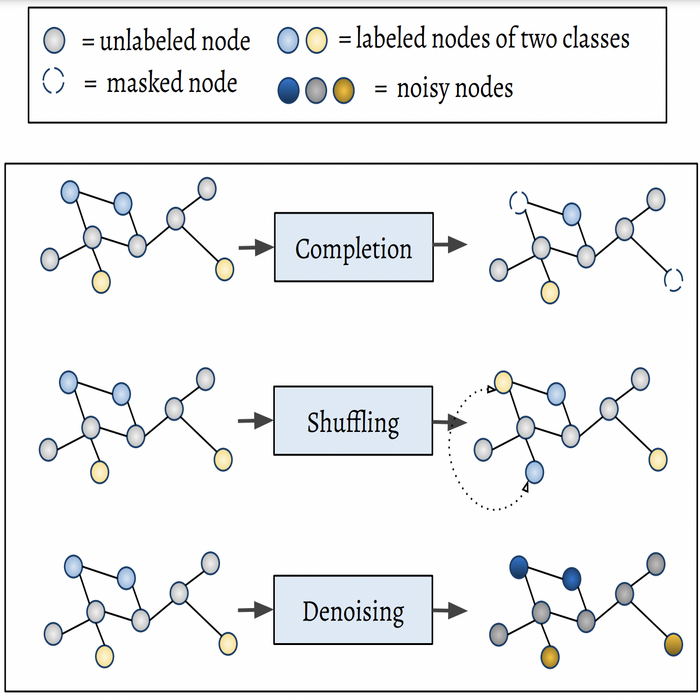

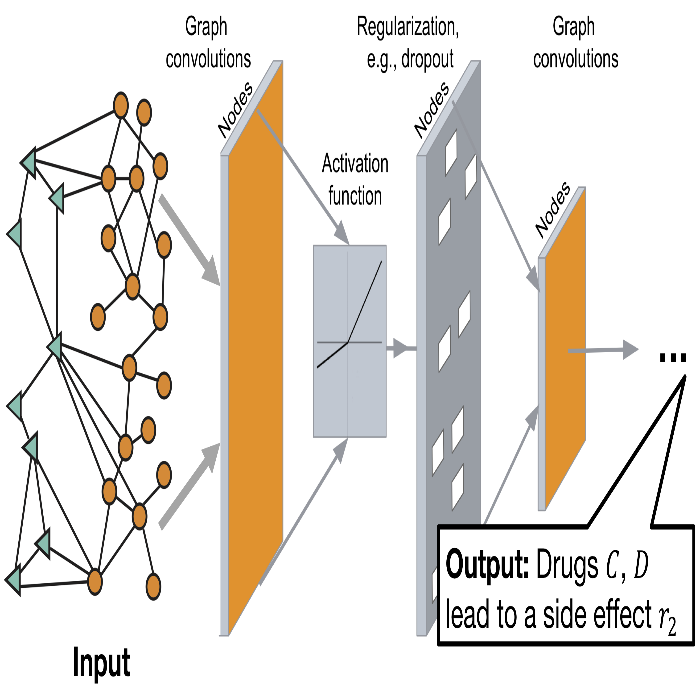

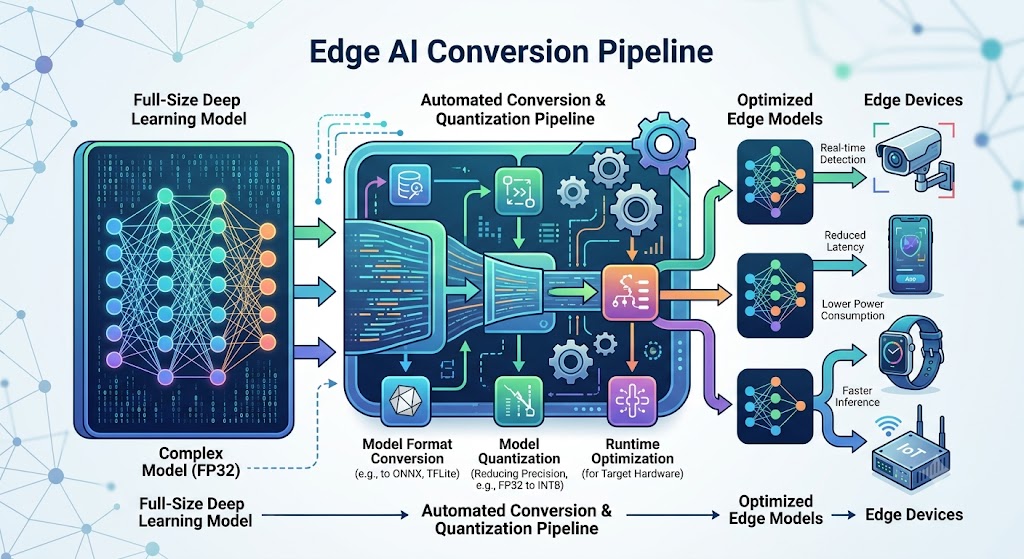

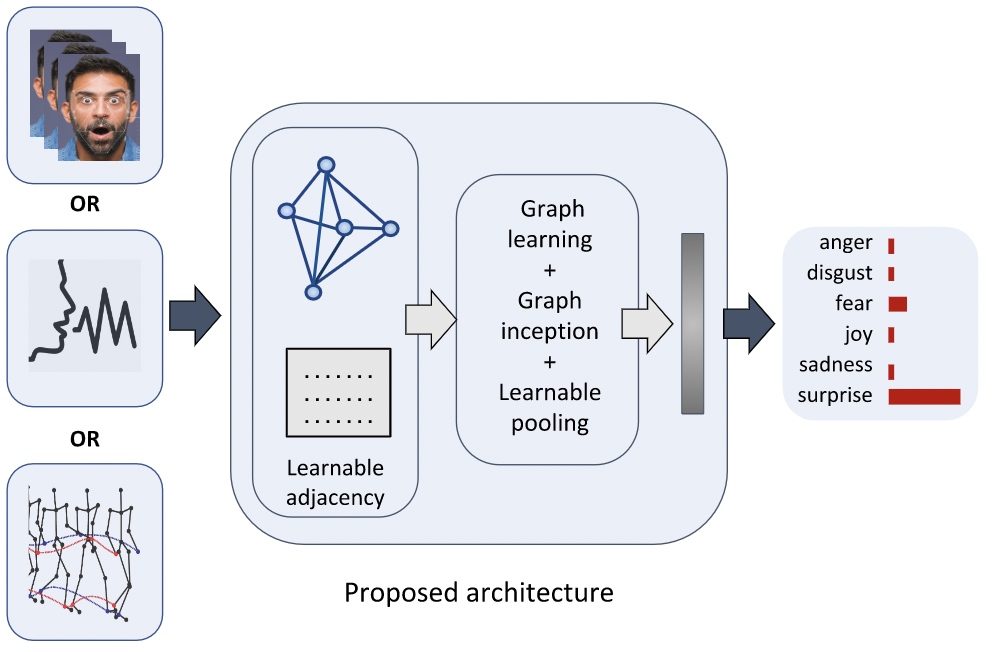

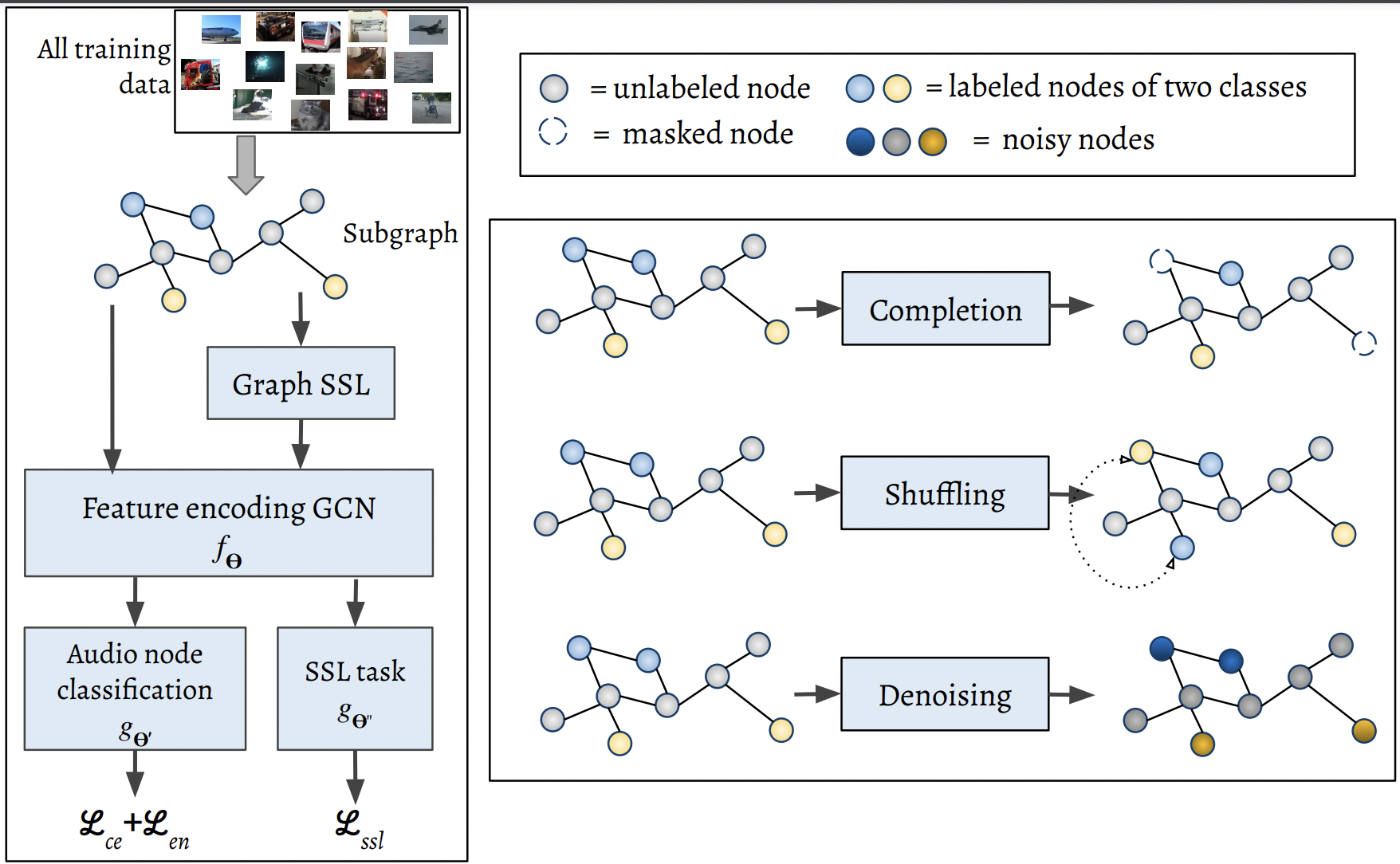

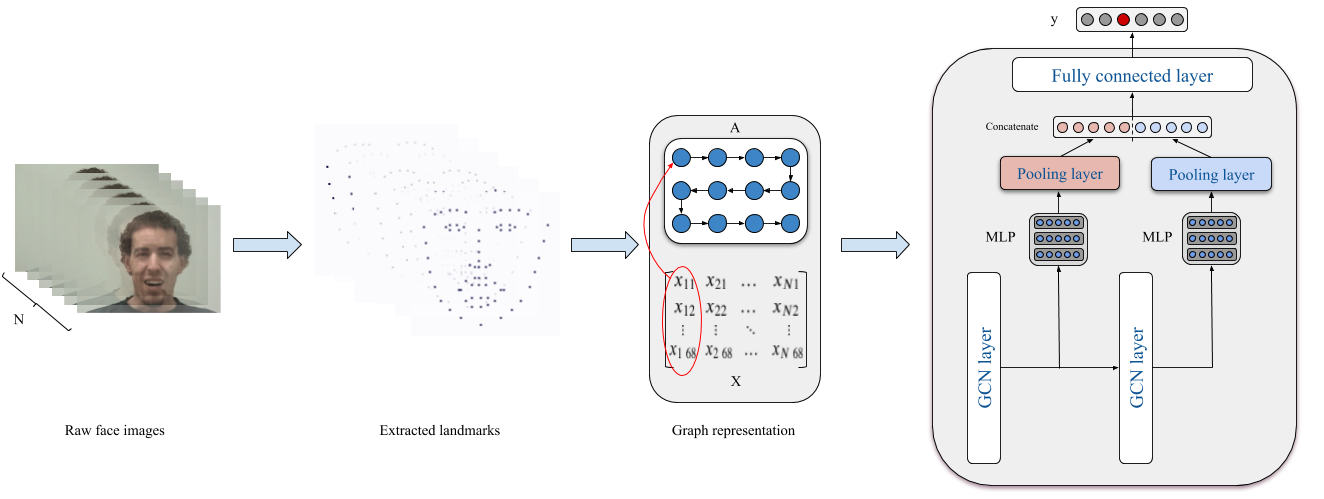

I turn research into products by combining deep learning, graph-based reasoning, and large-language-model engineering. I enjoy building robust MLOps pipelines, deploying inference-ready systems, and helping teams adopt modern AI tooling.

I hold a PhD in Computer Science from the University of Warwick and collaborate across industry and research to solve hard AI challenges with practical impact.

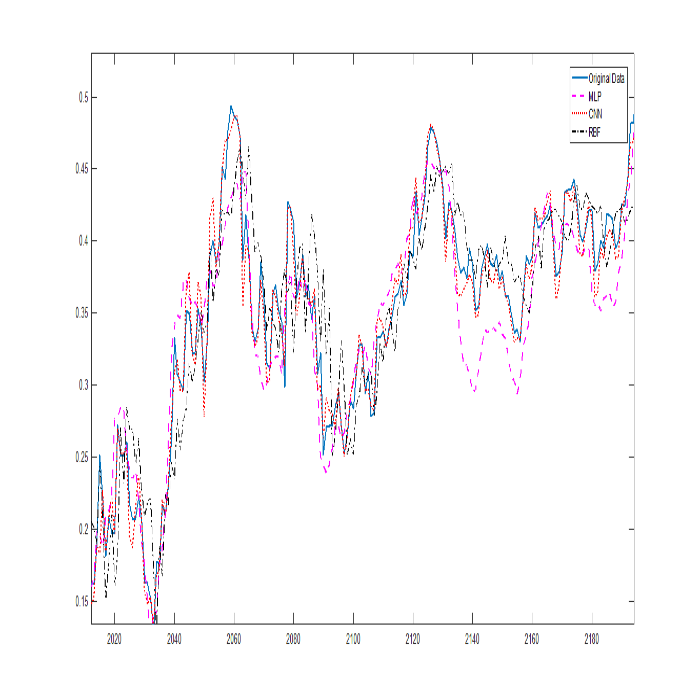

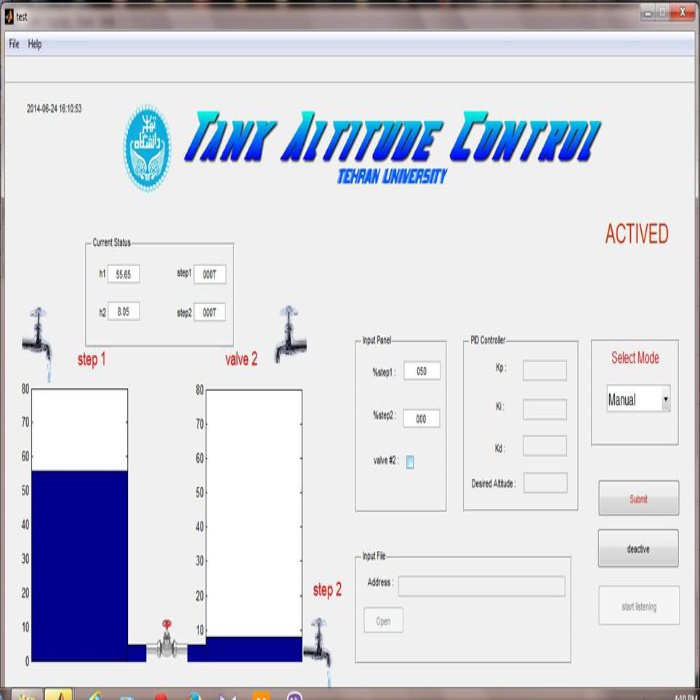

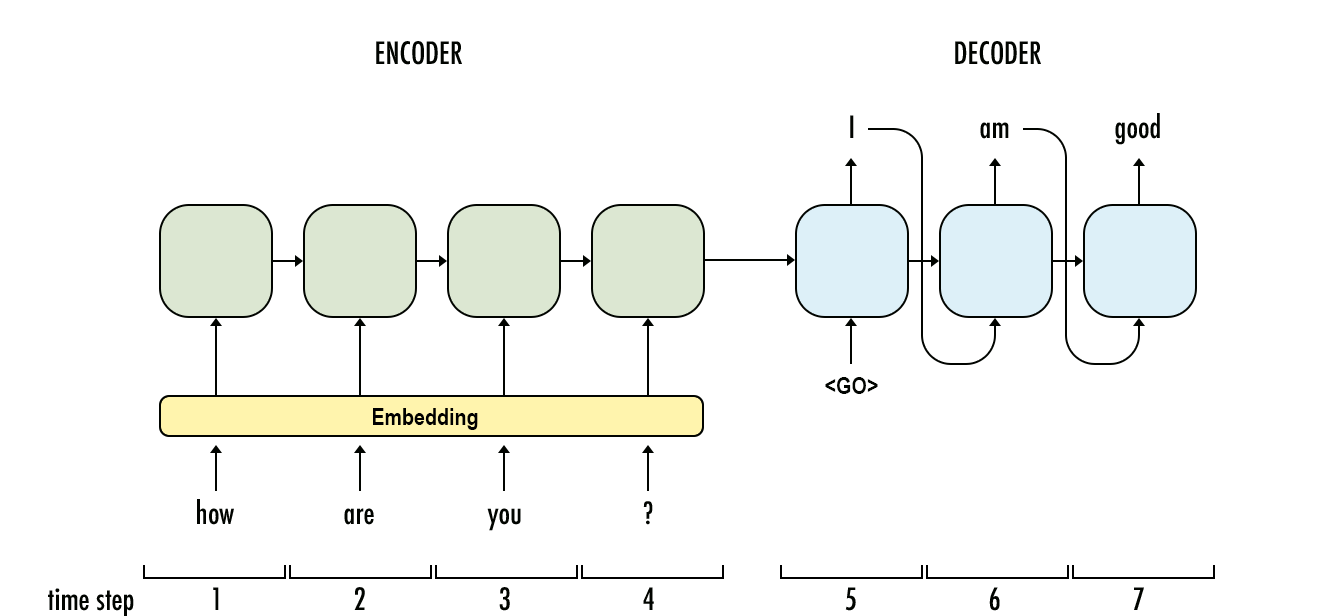

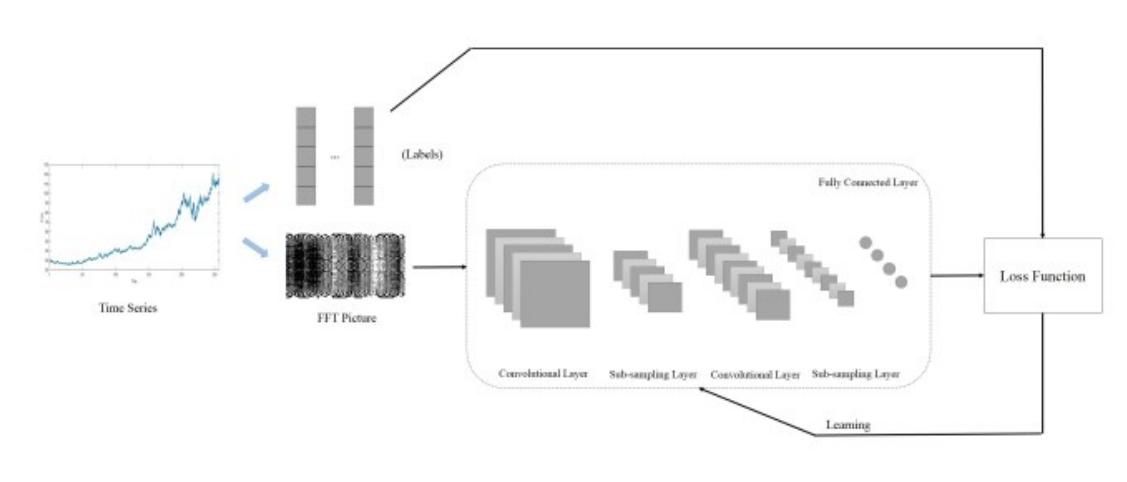

Prior to Warwick, I completed my MSc and BSc in the ECE Department at the University of Tehran, Iran. During my master's, I worked on deep learning models for stock price prediction, and I designed and implemented many other deep learning models for a range of tasks.

I received my diploma in Physics and Mathematics from Shahid Beheshti, under the supervision of NODET (National Organization for Developing Exceptional Talents).

I was born in Shahrekord, a beautiful city in Iran (you can see some photos here). I spent 25 years of my life in Iran, which gave me valuable skills and great memories.